Running Unraid? What's your backup plan?

Unraid is a fantastic platform for NAS storage, virtualisation and Docker applications. It really can be a jack of all trades!

But if you're like me, you throw on plenty of your own documents, personal files, photos, music and disk images, not to mention your ever-growing Plex library.

So, what happens if the unthinkable happens? One of your disks fails? Sure, Unraid is very resilient to disk failures and can rebuild the data from a parity disk. The Unraid USB drive fails? That's nasty. Wormable ransomware through SMB? Big ouch. Or, in my case, a dual parity failure plus a lost data drive, all down to a bad RAID controller.

Now I can probably say that if you're running Unraid, it's best to avoid RAID controllers for handling physical disk volumes. But if you're running server hardware with SCSI, you might be limited to that option, especially if you're unable to use HBA mode for your disks. If you want to "pass through" a disk, your only option is RAID 0. YIKES!

This got me seriously thinking... How do I avoid this in the future and prevent further data losses?

A second warm/cold storage solution!

The backup server

I have a second server that I originally used for Unraid, but its lack of CPU grunt hit the limit when it came to Docker and VMs. The server has 16TB of storage, so it should make easy work of data backup.

Fortunately, I still have an Unraid Basic licensed USB, so I threw it back into the old server. I'm sure any Linux distribution can handle this too if you don't have a spare license. For the sake of this post, I'll be focusing on Unraid.

My solution was to use something dead simple and easy to maintain/configure. Rsync!

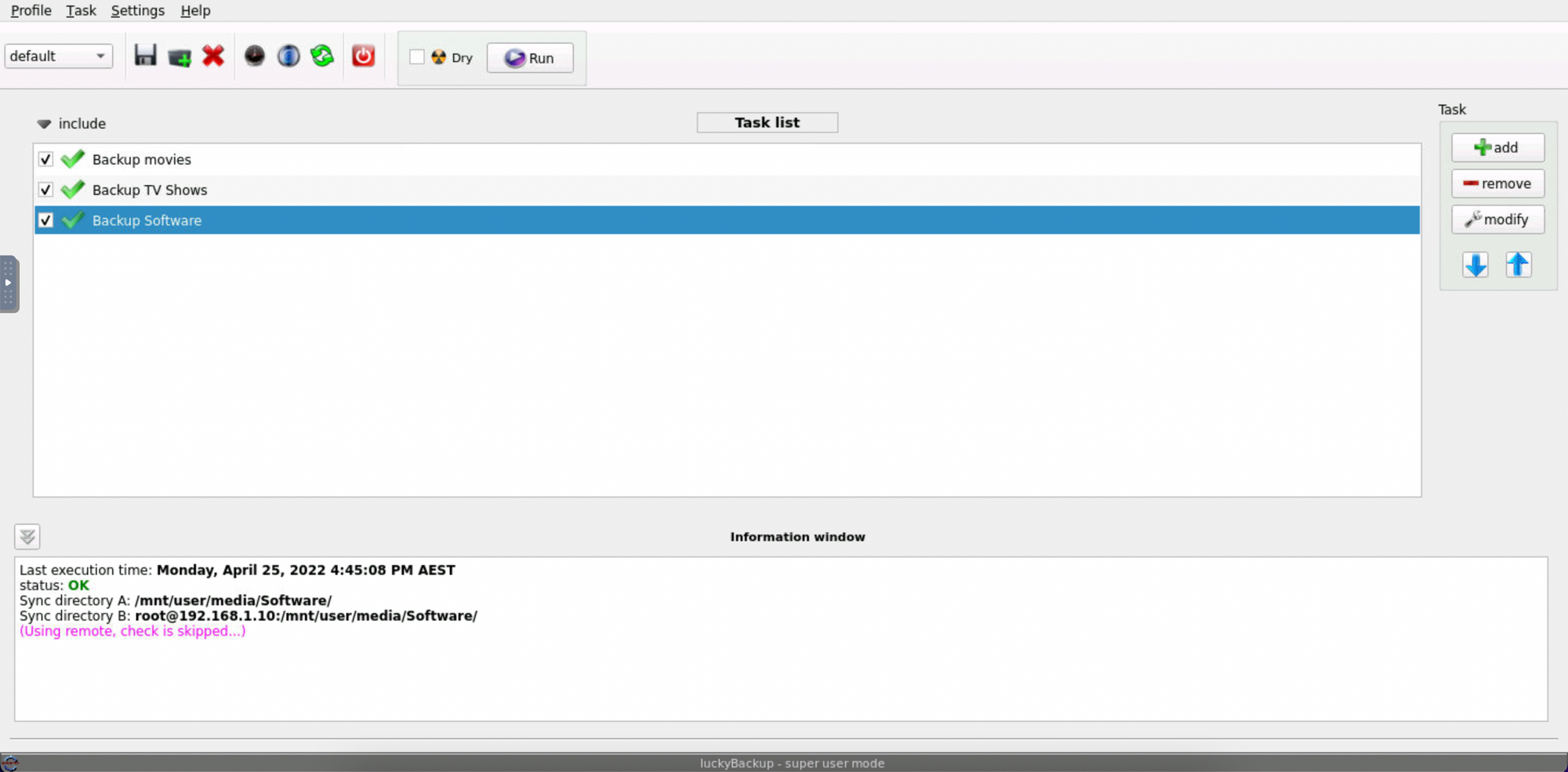

Now, since we're being extra lazy here, we can look at a tool called luckyBackup. luckyBackup is a GUI front end for rsync that handles the scripts and cron jobs in the background for you. On Unraid, it's available to install from the Community Apps page.

To avoid this being a lengthy post, you can find the setup instructions on the SPX Labs blog: "Backup your Unraid Server with LuckyBackup" (SPX Labs).

I've also linked a video on the steps for the visual learners:

Other Linux distros can do this too, or if you want to DIY, you can write your own rsync bash scripts and cron jobs.
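If you do go the DIY route, a minimal sketch might look something like this. The host name, user and paths are placeholders I've made up for illustration, not anything from my actual setup, and the script only prints the rsync command unless you set `DRY_RUN=0`.

```shell
#!/bin/sh
# Sketch of a DIY rsync backup over SSH. Every host, user and path below
# is a hypothetical placeholder; swap in your own values.
set -eu

BACKUP_HOST="${BACKUP_HOST:-backup-server.local}"   # placeholder backup server
BACKUP_USER="${BACKUP_USER:-backup}"                # placeholder non-root user
DEST_ROOT="${DEST_ROOT:-/mnt/user/backups}"         # placeholder destination share

# Print the remote destination for a given source directory.
remote_dest() {
    printf '%s@%s:%s/%s/\n' "$BACKUP_USER" "$BACKUP_HOST" "$DEST_ROOT" "$(basename "$1")"
}

# Copy one directory to the backup server (prints the command in dry-run mode).
backup_dir() {
    src="${1%/}/"                  # trailing slash: copy the contents, not the folder itself
    dest="$(remote_dest "$1")"
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "rsync -a --partial -e ssh $src $dest"
    else
        # -a keeps permissions and timestamps, --partial keeps interrupted transfers.
        # Adding --delete would also mirror deletions, which you may not want in a backup.
        rsync -a --partial -e ssh "$src" "$dest"
    fi
}

# Example (hypothetical share): DRY_RUN=0 backup_dir /mnt/user/photos
```

Running it in dry-run mode first is a cheap way to check the source and destination paths before any data moves.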

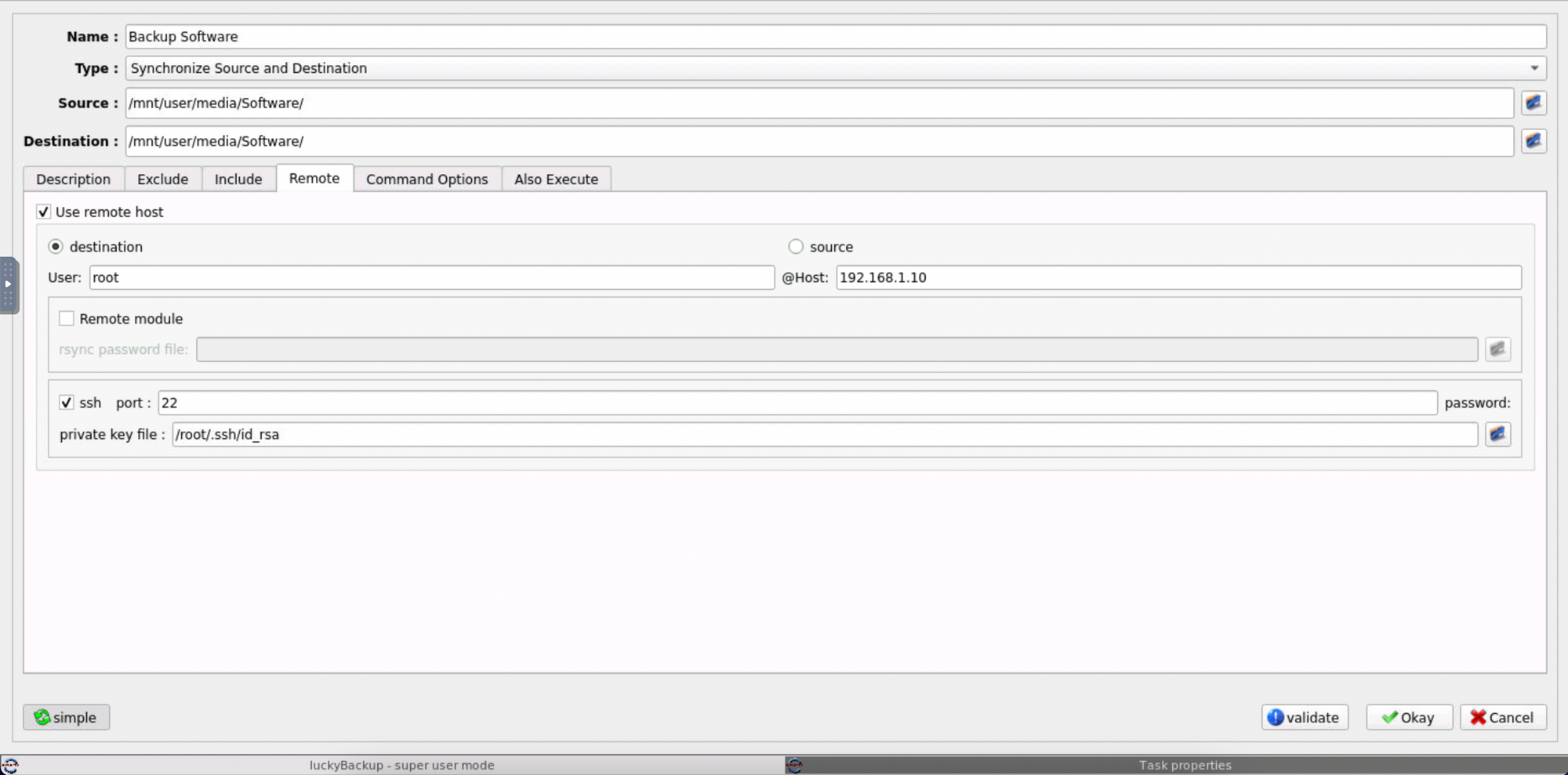

Once I got luckyBackup set up, I went through the folders I wanted rsync to run on and pointed it at my second Unraid server over SSH. Dead easy! Note that I'm using root in this example. I recommend creating another user with the correct permissions and limiting its access (for example, keeping it out of privileged groups such as wheel) for better security practice.

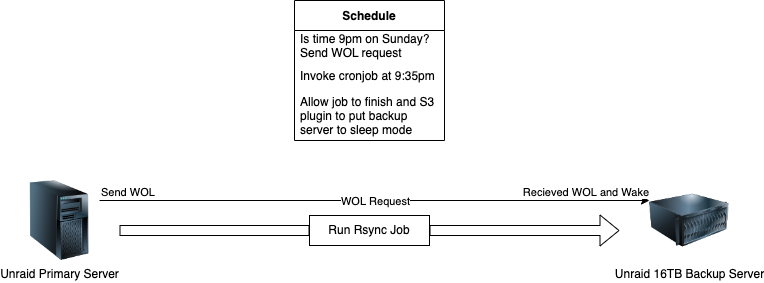

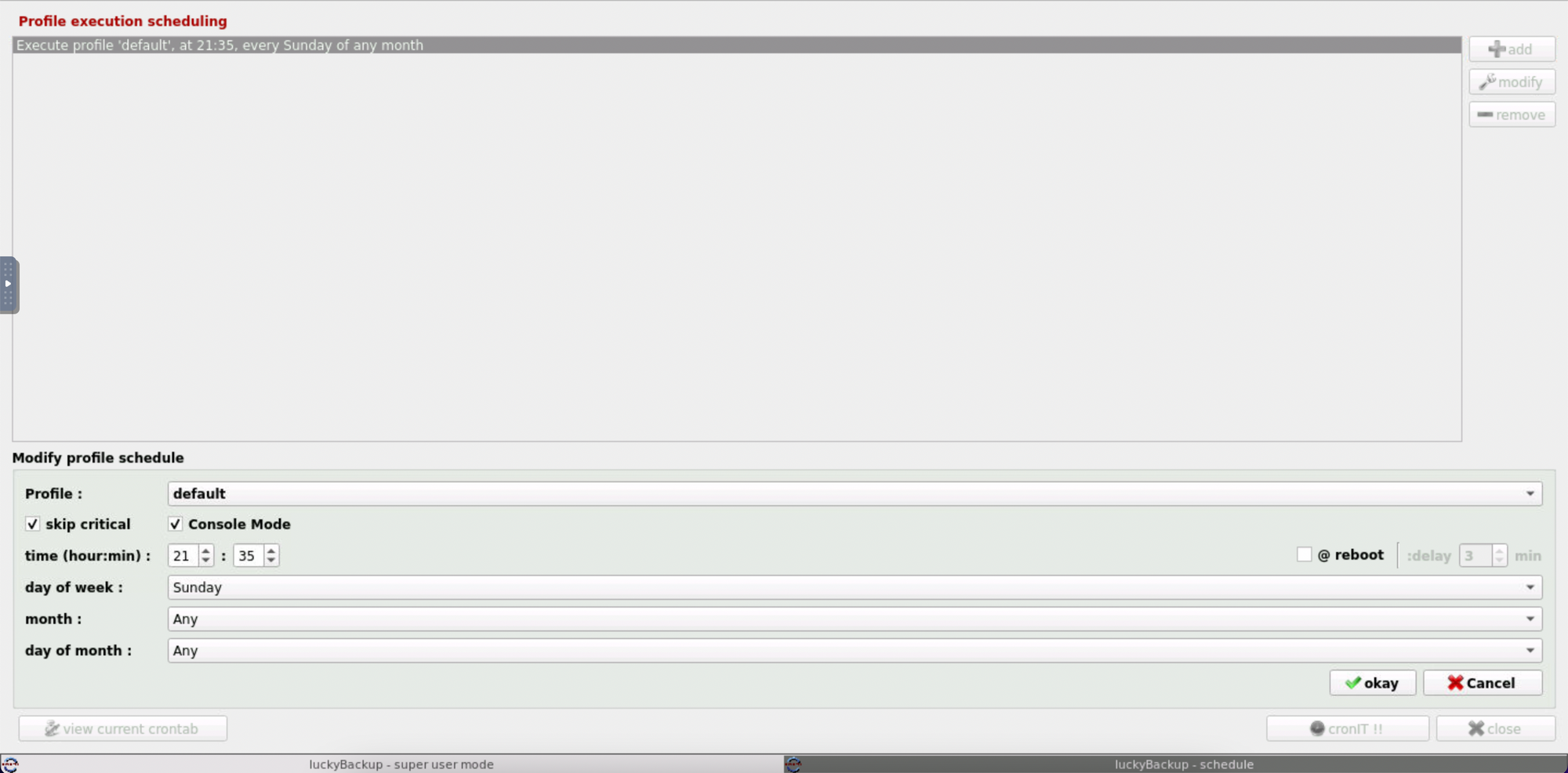

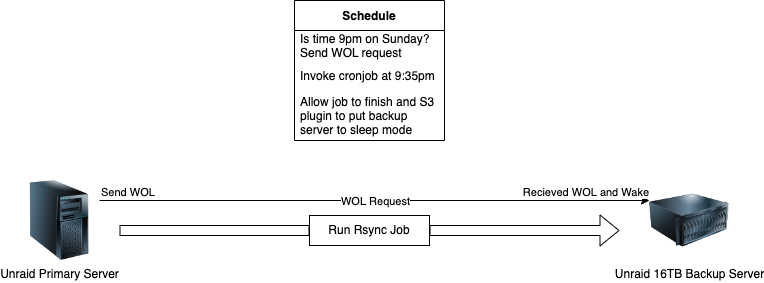

Now... how do we automate this? Well, the first step is luckyBackup's scheduling feature, which adds the job to the crontab. In this example I'm running it at 9:35pm every Sunday.
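For anyone scheduling a DIY script by hand instead, the same 9:35pm Sunday slot can be written as a crontab line. Here's a small sketch that builds it; the script path is a made-up placeholder, and the install command is left commented out so nothing changes until you're ready.

```shell
# Build a crontab line: minute hour day-of-month month day-of-week command
cron_line() {
    # $1 = minute, $2 = hour (24h), $3 = day of week (0 = Sunday), $4 = command
    printf '%s %s * * %s %s\n' "$1" "$2" "$3" "$4"
}

BACKUP_SCRIPT="/usr/local/bin/backup.sh"   # hypothetical path to your rsync script

cron_line 35 21 0 "$BACKUP_SCRIPT"
# prints: 35 21 * * 0 /usr/local/bin/backup.sh

# To append it to the current user's crontab:
# ( crontab -l 2>/dev/null; cron_line 35 21 0 "$BACKUP_SCRIPT" ) | crontab -
```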

The last step is to use the S3 Sleep plugin to keep the backup server asleep, and a wake-on-LAN function to wake it at 9:30pm (leaving 5 minutes to spare for anything else). This brings the server out of sleep so our primary Unraid server can connect to it and run the rsync jobs.
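If you'd rather script the wake-up yourself, a rough sketch could look like the following. It assumes the `etherwake` tool is installed on the machine sending the packet (`wakeonlan` is a common alternative) and uses a placeholder MAC address and host name. Instead of relying on a fixed five-minute gap, this version polls with ping until the backup server answers, which is a different approach to the one I described above.

```shell
BACKUP_MAC="${BACKUP_MAC:-00:11:22:33:44:55}"       # placeholder MAC address
BACKUP_HOST="${BACKUP_HOST:-backup-server.local}"   # placeholder host name

# Return 0 if the argument looks like a MAC address (aa:bb:cc:dd:ee:ff).
is_valid_mac() {
    printf '%s\n' "$1" | grep -Eq '^([0-9A-Fa-f]{2}:){5}[0-9A-Fa-f]{2}$'
}

# Send a magic packet, then poll until the host answers ping or we give up.
wake_and_wait() {
    is_valid_mac "$BACKUP_MAC" || { echo "bad MAC: $BACKUP_MAC" >&2; return 1; }
    etherwake "$BACKUP_MAC"        # assumption: etherwake is available and run as root
    tries=0
    until ping -c 1 -W 2 "$BACKUP_HOST" >/dev/null 2>&1; do
        tries=$((tries + 1))
        if [ "$tries" -ge 30 ]; then
            echo "host did not wake" >&2
            return 1
        fi
        sleep 5
    done
}

# Example: wake_and_wait && /usr/local/bin/backup.sh   (hypothetical script path)
```

Wake-on-LAN also has to be enabled in the backup server's BIOS/UEFI and on its network card, so it's worth testing the wake by hand before trusting the schedule.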

Once the jobs complete, the server senses that no sizeable disk activity is occurring (expected after rsync is done) and puts itself back to sleep! Now that's what I call a lazy and simple solution!

If you're running another Linux distro, you could configure the sleep settings under your power options or, if you're feeling adventurous, add a one-liner at the end of your rsync script with the shutdown now command. You'll then want a wake alarm on the device to power it back on; some UEFI computers can be configured to power on automatically from the S5 ACPI state this way. I recommend you validate this first, otherwise the whole automation goes to waste.
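As a sketch of the wake alarm idea, the `rtcwake` tool from util-linux can set the hardware clock alarm and power the machine off in one go. The snippet below assumes GNU `date` and a board that honours RTC wake from S5, and it only prints the command unless you set `DRY_RUN=0`. The Sunday 9:30pm target simply mirrors my schedule above.

```shell
# Sketch only: assumes GNU date and rtcwake from util-linux are available.

# Print the epoch of the next Sunday 21:30 (local time) after $1 (epoch, default: now).
next_sunday_2130() {
    now="${1:-$(date +%s)}"
    today="$(date -d "@$now" +%Y-%m-%d)"
    dow="$(date -d "@$now" +%u)"                     # 1 = Monday ... 7 = Sunday
    days_ahead=$(( (7 - $dow) % 7 ))
    target_day="$(date -d "$today +$days_ahead days" +%Y-%m-%d)"
    target="$(date -d "$target_day 21:30" +%s)"
    if [ "$target" -le "$now" ]; then                # this week's slot has already passed
        target_day="$(date -d "$target_day +7 days" +%Y-%m-%d)"
        target="$(date -d "$target_day 21:30" +%s)"
    fi
    echo "$target"
}

# Print (or, with DRY_RUN=0, run) the command that sets the alarm and powers off.
sleep_until_next_backup() {
    wake_at="$(next_sunday_2130)"
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "rtcwake -m off -t $wake_at"
    else
        rtcwake -m off -t "$wake_at"                 # -m off: power off (S5) after setting the alarm
    fi
}
```

Calling `sleep_until_next_backup` at the end of the backup job would take the place of a plain shutdown now, and `rtcwake -m no` sets the alarm without powering off if you want to test the wake on its own.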

Hopefully, this all helps in setting up your own backup solution and potentially automating it in the process!